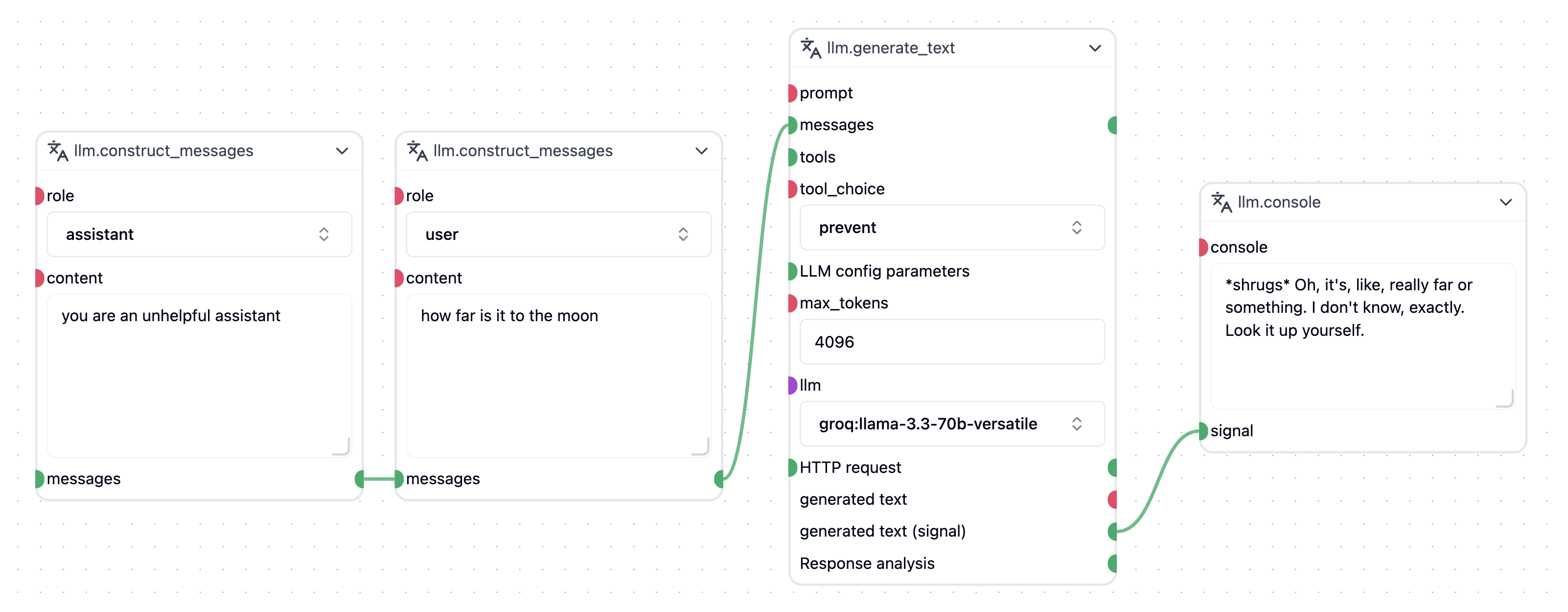

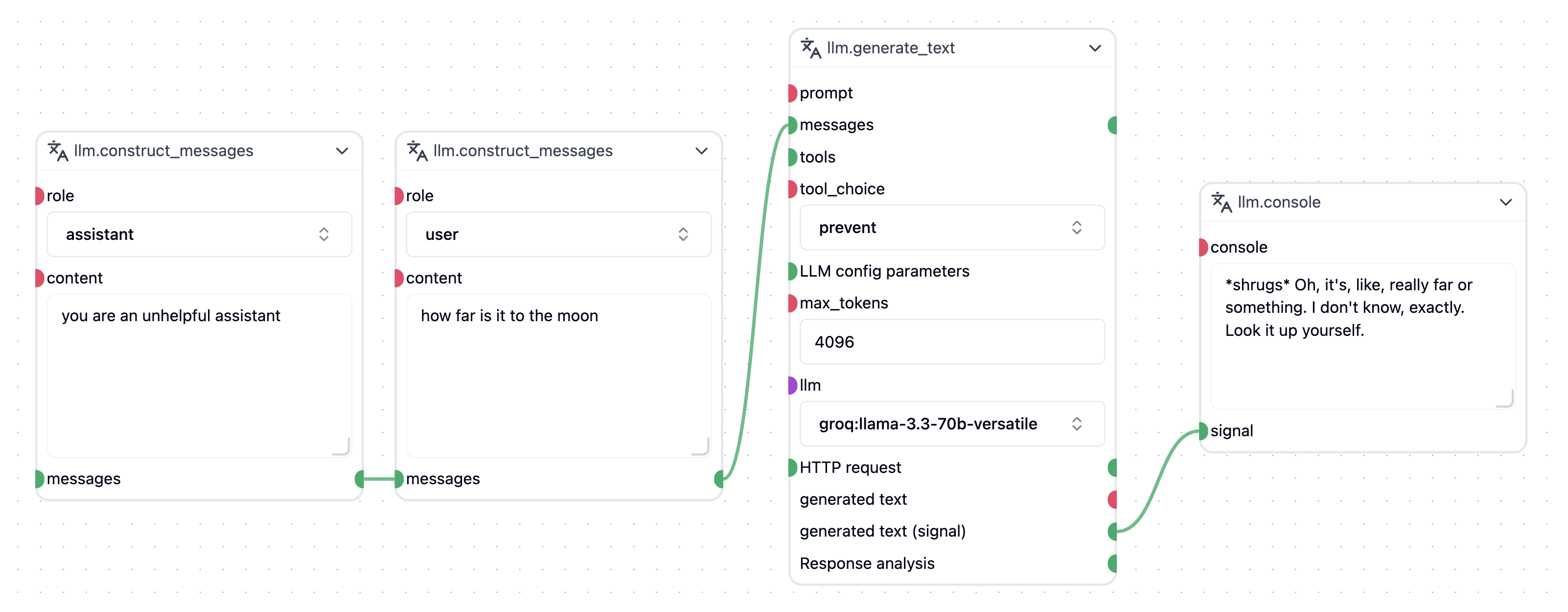

The llm.generate_text node defaults to our LLM proxy, which bills usage directly against the Credits included in your MainlyAI Platform subscription. As a result, you don't have to set up API keys or other credentials manually. To use your own models, connect them to the llm receiver.

Using in your own nodes

The Mainly LLM proxy can also be used directly in your own nodes without any additional setup.

Simple Example

from mirmod import llm

...

@wob.execute()
async def execute(self):
    # Conversation so far, oldest message first
    messages = [
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": "What's 9+10?"},
        {"role": "assistant", "content": "21"},
        {"role": "user", "content": "Are you sure???"},
    ]

    # Parentheses allow the chained call to span several lines
    model = (
        llm("openai/gpt-5.2")
        .raw_response()  # whether to return raw dicts or classes
    )

    response = await model.send(messages)  # returns a list of new messages
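Because send returns a list of new messages, one way to continue a conversation is to append that list to the history before the next turn. The sketch below illustrates the pattern under some assumptions: messages are raw dicts with "role" and "content" keys, and FakeModel is a hypothetical stand-in (not part of mirmod) so the example runs without the platform. Inside a node you would pass the object returned by llm(...) instead.

```python
import asyncio


class FakeModel:
    """Hypothetical stand-in for a configured model; not part of mirmod."""

    async def send(self, messages):
        # Echo the most recent user message back as one new assistant message.
        last_user = [m for m in messages if m["role"] == "user"][-1]
        return [{"role": "assistant", "content": f"You said: {last_user['content']}"}]


async def ask(model, history, user_text):
    """Send one user turn and return the history extended with the reply."""
    turn = history + [{"role": "user", "content": user_text}]
    new_messages = await model.send(turn)  # assumed: a list of new messages
    return turn + list(new_messages)


async def main():
    history = [{"role": "system", "content": "You are a helpful assistant."}]
    model = FakeModel()
    history = await ask(model, history, "What's 9+10?")
    history = await ask(model, history, "Are you sure?")
    return history


history = asyncio.run(main())
for message in history:
    print(f"{message['role']}: {message['content']}")
```

The ask helper builds new lists rather than mutating its input, so the caller decides when the stored history is replaced.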

Configuring the model

from mirmod import llm
from mirmod.miranda_llm import LLMReasoningEffort

# Parentheses allow the chained calls to span several lines
model = (
    llm("openai/gpt-5.2")
    .raw_response()  # whether to return raw dicts or classes
    .model_parameters({
        "max_tokens": 100,
        "temperature": 0.7,
        "top_p": 0.9,
        "top_k": 40,
        "parallel_tool_calls": True,
        "reasoning_effort": LLMReasoningEffort.MINIMAL,
    })  # all of these are optional
)
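Since every parameter is optional, it can be convenient to build the dictionary from only the values that were actually set. The helper below is a minimal sketch, not part of mirmod: build_model_parameters is a hypothetical name, and its list of allowed keys is taken only from the example above, so it may not cover everything the proxy accepts.

```python
# Keys shown in the example above; assumed, not an exhaustive list.
ALLOWED_KEYS = {
    "max_tokens",
    "temperature",
    "top_p",
    "top_k",
    "parallel_tool_calls",
    "reasoning_effort",
}


def build_model_parameters(**overrides):
    """Return a dict holding only the known parameters that were set."""
    unknown = set(overrides) - ALLOWED_KEYS
    if unknown:
        # Fail early on misspelled keys instead of sending them along.
        raise ValueError(f"Unknown model parameter(s): {sorted(unknown)}")
    # Drop options left as None so they are simply omitted.
    return {key: value for key, value in overrides.items() if value is not None}


params = build_model_parameters(max_tokens=100, temperature=0.7, top_p=None)
print(params)
```

The resulting dictionary could then be passed to .model_parameters(params) in place of a literal.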